Associative Memories via Predictive Coding.

Predictive coding has been proposed as a general theory to describe how the entire brain works. It assumes that the brain is constantly making predictions, for example about sensations it receives. Predictive coding could also describe memory, but this has not been clearly demonstrated. Here, we show with computer simulations that predictive coding networks can work as effective associative memories, and that memory retrieval could be conceived as “predicting” missing pieces of information on the basis of cues provided, like predicting memorised songs from the first few notes.

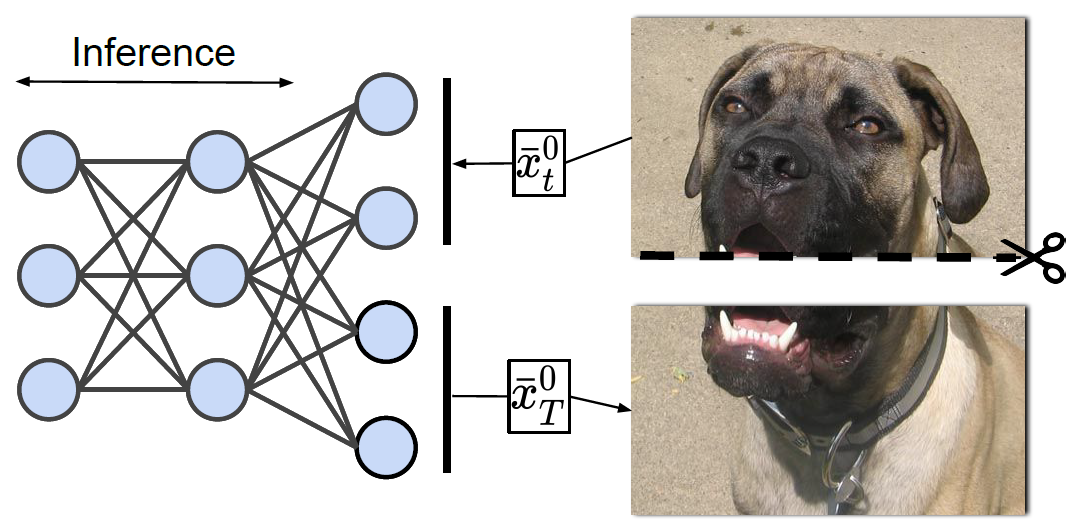

Associative memories in the brain receive and store patterns of activity registered by the sensory neurons, and are able to retrieve them when necessary. Due to their importance in human intelligence, computational models of associative memories have been developed for several decades. In this paper, we present a novel neural model for realizing associative memories, which is based on a hierarchical generative network that receives external stimuli via sensory neurons. It is trained using predictive coding, an error-based learning algorithm inspired by information processing in the cortex. To test the model's capabilities, we perform multiple retrieval experiments from both corrupted and incomplete data points. In an extensive comparison, we show that this new model outperforms popular associative memory models, such as autoencoders trained via backpropagation and modern Hopfield networks, in both retrieval accuracy and robustness. In particular, when completing partial data points from natural image datasets such as ImageNet, our model achieves surprisingly high accuracy, even when only a tiny fraction of the pixels of the original images is presented. Our model provides a plausible framework to study learning and retrieval of memories in the brain, as it closely mimics the behavior of the hippocampus as a memory index and generative model.
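To make the idea of retrieval as prediction concrete, the following is a minimal sketch and not the model evaluated in the paper. It assumes a single linear generative layer instead of a hierarchical nonlinear network, a unit Gaussian prior on the latent activity, and toy random binary patterns. All function names (`infer`, `train`, `retrieve`) and hyperparameters are hypothetical choices made for illustration.

```python
import numpy as np

def infer(W, X, mask, n_steps=100):
    """Relax latents Z by gradient descent on the energy
    F = 0.5*||mask*(X - Z W^T)||^2 + 0.5*||Z||^2, starting from Z = 0.
    Unclamped sensory neurons (mask == 0) contribute no prediction error."""
    Z = np.zeros((X.shape[0], W.shape[1]))
    # Step size 1/L, where L upper-bounds the curvature of F with respect to Z.
    step = 1.0 / (1.0 + np.linalg.norm(W, 2) ** 2)
    for _ in range(n_steps):
        eps = mask * (X - Z @ W.T)   # prediction errors on clamped neurons
        Z += step * (eps @ W - Z)    # move latents down the energy gradient
    return Z

def train(X, n_hidden=8, n_epochs=300, lr=0.05, seed=0):
    """Store the rows of X by alternating inference and a local weight update."""
    rng = np.random.default_rng(seed)
    W = 0.1 * rng.standard_normal((X.shape[1], n_hidden))
    full = np.ones(X.shape[1])       # every sensory neuron is clamped in training
    for _ in range(n_epochs):
        Z = infer(W, X, full)
        eps = X - Z @ W.T
        W += lr * eps.T @ Z / len(X)  # error times presynaptic activity
    return W

def retrieve(W, cue, mask):
    """Keep the known entries of the cue and fill the rest with predictions."""
    Z = infer(W, cue[None, :], mask)
    pred = (Z @ W.T)[0]
    return np.where(mask > 0, cue, pred)

# Toy data (an assumption): three random +/-1 patterns of 64 "pixels".
rng = np.random.default_rng(1)
patterns = rng.choice([-1.0, 1.0], size=(3, 64))
W = train(patterns)

# Present only the first half of pattern 0 and let the network predict the rest.
mask = (np.arange(64) < 32).astype(float)
cue = patterns[0] * mask
recalled = retrieve(W, cue, mask)
missing_mse = np.mean((recalled[32:] - patterns[0][32:]) ** 2)
print("MSE on the missing half:", missing_mse)
```

In this sketch the clamped sensory neurons act as the cue, and the free ones settle to whatever the generative weights predict from the inferred latent state. The prior term slightly shrinks the predictions toward zero, so the missing values are recovered approximately, not exactly.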

2023. PLoS Comput Biol, 19(4):e1010719.

2023. Adv Neural Inf Process Syst, 36:44341-44355.

2017. J Math Psychol, 76(Pt B):198-211.