A Stable, Fast, and Fully Automatic Learning Algorithm for Predictive Coding Networks

Predictive coding is an influential mathematical model describing computations and learning in a part of the brain called the cortex. However, existing methods for simulating this model have been slow. This paper describes how this model can be simulated much faster.

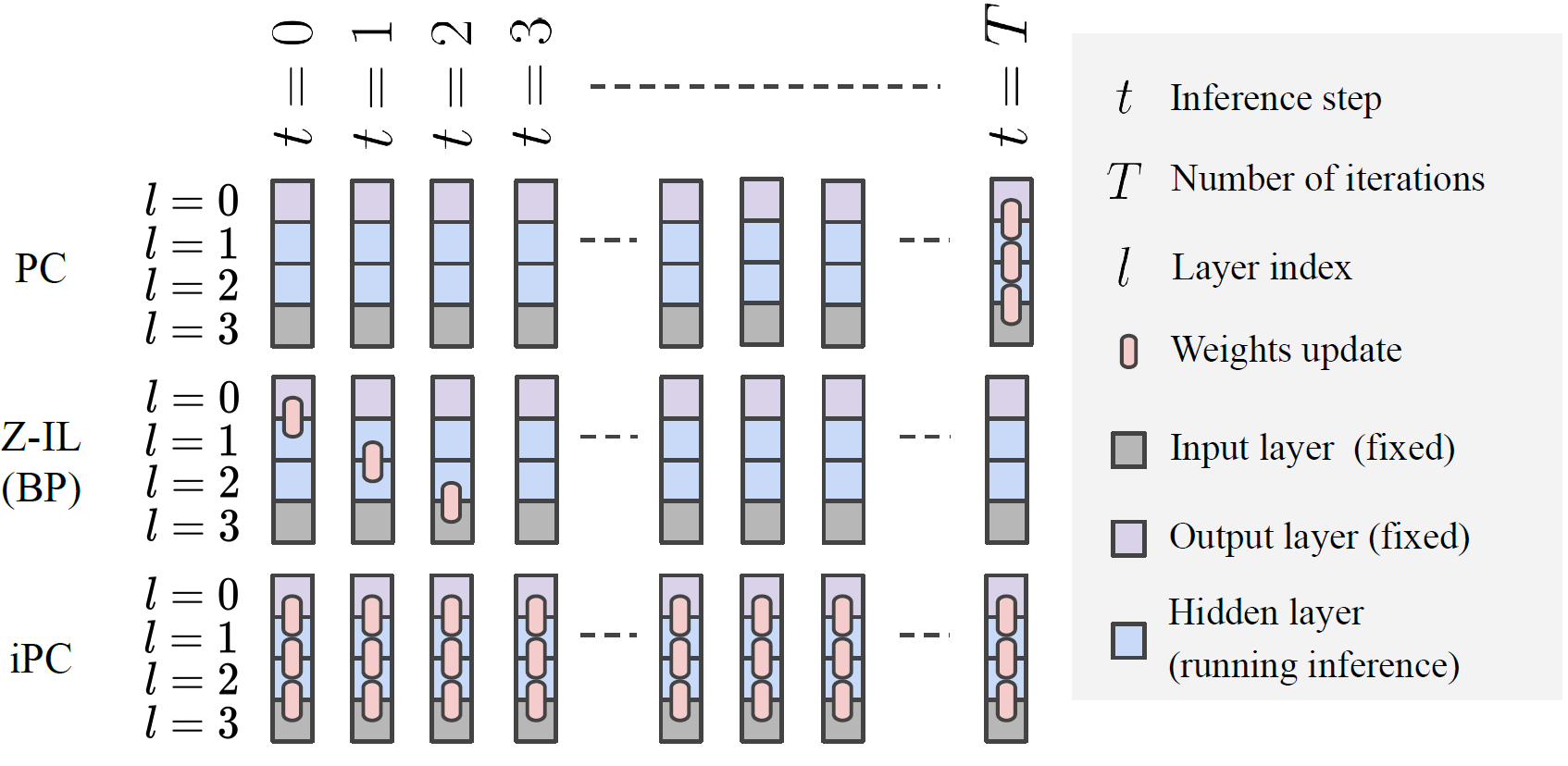

Predictive coding networks are neuroscience-inspired models with roots in both Bayesian statistics and neuroscience. Training such models, however, is quite inefficient and unstable. In this work, we show that simply changing the temporal scheduling of the update rule for the synaptic weights leads to an algorithm that is much more efficient and stable than the original one and has theoretical guarantees in terms of convergence. The proposed algorithm, which we call incremental predictive coding (iPC), is also more biologically plausible than the original one, as it is fully automatic. In an extensive set of experiments, we show that iPC consistently performs better than the original formulation on a large number of benchmarks for image classification, as well as for the training of both conditional and masked language models, in terms of test accuracy, efficiency, and convergence with respect to a large set of hyperparameters.
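To make the change in "temporal scheduling" concrete, the following is a minimal NumPy sketch contrasting the two schedules, not the authors' implementation. It assumes the standard predictive coding energy, the sum of squared prediction errors `F = 0.5 * sum_l ||x_l - W_{l-1} f(x_{l-1})||^2`, with a tanh nonlinearity `f`, input and output nodes clamped to data and target, a fixed number of steps `T` per batch, and illustrative learning rates. In the standard (PC) schedule, the weights are updated once, after the value nodes have been relaxed for `T` steps; in the incremental (iPC) schedule as sketched here, nodes and weights take a gradient step on `F` together at every step. All function names (`train_pc`, `train_ipc`, `step_nodes`, and so on) and hyperparameter values are hypothetical.

```python
import numpy as np

def f(x):
    return np.tanh(x)

def df(x):
    return 1.0 - np.tanh(x) ** 2

def errors(xs, Ws):
    # e[l] = x[l] - W[l-1] f(x[l-1]) for l = 1..L; e[0] is unused (input is clamped)
    return [None] + [xs[l] - f(xs[l - 1]) @ Ws[l - 1].T for l in range(1, len(xs))]

def energy(xs, Ws):
    # Assumed PC energy: half the summed squared prediction errors
    return 0.5 * sum(np.sum(e ** 2) for e in errors(xs, Ws)[1:])

def init_nodes(x_in, y, Ws):
    # Feedforward initialisation of the value nodes, then clamp the output to the target
    xs = [x_in]
    for W in Ws:
        xs.append(f(xs[-1]) @ W.T)
    xs[-1] = y.copy()
    return xs

def step_nodes(xs, es, Ws, lr_x):
    # One gradient step on F w.r.t. the hidden nodes (input and output stay clamped)
    new = list(xs)
    for l in range(1, len(xs) - 1):
        grad = es[l] - df(xs[l]) * (es[l + 1] @ Ws[l])
        new[l] = xs[l] - lr_x * grad
    return new

def step_weights(xs, es, Ws, lr_w):
    # dF/dW[l] = -e[l+1]^T f(x[l]); gradient descent, averaged over the batch
    n = xs[0].shape[0]
    return [W + lr_w * (es[l + 1].T @ f(xs[l])) / n for l, W in enumerate(Ws)]

def train_pc(x_in, y, Ws, T=20, lr_x=0.1, lr_w=0.01):
    # Standard schedule: relax the nodes for T steps with frozen weights, then update weights once
    xs = init_nodes(x_in, y, Ws)
    for _ in range(T):
        xs = step_nodes(xs, errors(xs, Ws), Ws, lr_x)
    return step_weights(xs, errors(xs, Ws), Ws, lr_w)

def train_ipc(x_in, y, Ws, T=20, lr_x=0.1, lr_w=0.01):
    # Incremental schedule: nodes and weights are updated simultaneously at every step,
    # both using the errors computed at the start of that step
    xs = init_nodes(x_in, y, Ws)
    for _ in range(T):
        es = errors(xs, Ws)
        xs, Ws = step_nodes(xs, es, Ws, lr_x), step_weights(xs, es, Ws, lr_w)
    return Ws

# Usage on a tiny random regression batch (sizes 4 -> 8 -> 2, chosen arbitrarily)
rng = np.random.default_rng(0)
x_in = rng.normal(size=(16, 4))
y = 0.5 * rng.normal(size=(16, 2))
W0 = [rng.normal(scale=0.3, size=(8, 4)), rng.normal(scale=0.3, size=(2, 8))]

W_pc, W_ipc = W0, W0
for epoch in range(200):
    W_pc = train_pc(x_in, y, W_pc)
    W_ipc = train_ipc(x_in, y, W_ipc)

start = energy(init_nodes(x_in, y, W0), W0)
print(start, energy(init_nodes(x_in, y, W_pc), W_pc), energy(init_nodes(x_in, y, W_ipc), W_ipc))
```

With the same number of inference steps, the incremental schedule in this sketch performs `T` weight updates per batch instead of one, and it never has to decide when inference has converged before learning can start; this is one way to read the claim that the algorithm is "fully automatic".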
